Provider example

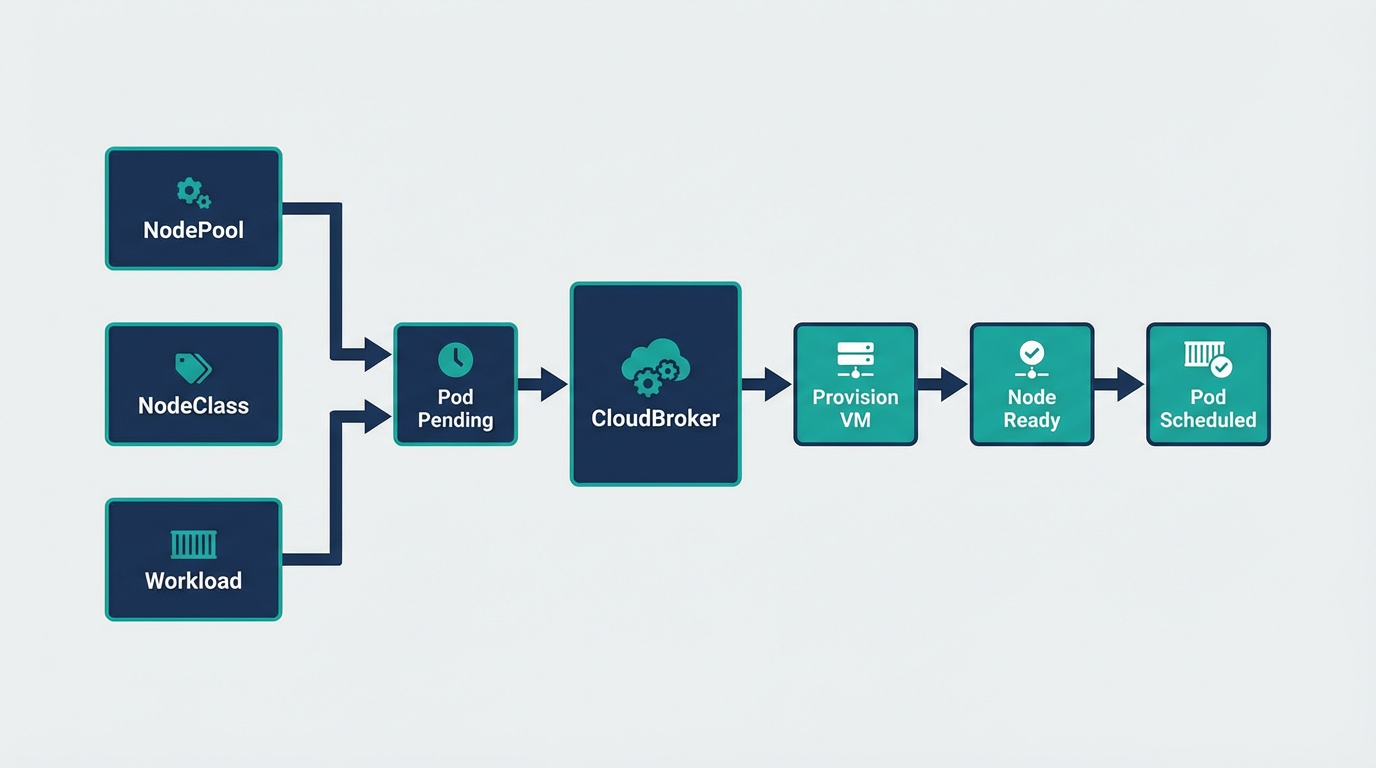

AWS — NodePool, NodeClass & Workload

Full manifests and kubectl commands for Amazon Web Services.

Replace placeholders

- <HOST_TAILSCALE_IP> → Kind control-plane Tailscale IP
- ami-xxxxxxxx → AWS AMI ID
- subnet-xxxxxxxx → VPC subnet ID
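The substitutions listed above could be scripted rather than edited by hand. The following is a minimal sketch, assuming the manifests have been saved to a single file (aws-example.yaml is the name used in step 5). The function name fill_placeholders is hypothetical and not part of any tool referenced here.

```shell
# Sketch: print a copy of a manifest file with the three placeholders filled in.
# Assumes the replacement values contain no "|" or "&" characters
# (true for IP addresses, AMI IDs and subnet IDs).
fill_placeholders() {
  # $1 = manifest file, $2 = Tailscale IP, $3 = AMI ID, $4 = subnet ID
  sed -e "s|<HOST_TAILSCALE_IP>|$2|g" \
      -e "s|ami-xxxxxxxx|$3|g" \
      -e "s|subnet-xxxxxxxx|$4|g" \
      "$1"
}
```

For instance, `fill_placeholders aws-example.yaml 100.64.0.10 ami-0123456789abcdef0 subnet-0123456789abcdef0 > aws-example.filled.yaml` would write a filled-in copy and leave the original untouched. Every value in that command is made up for illustration.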

Ensure both secrets exist before applying the manifests: tailscale-auth and aws-credentials (both are created in step 1).
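One way to confirm that the secrets are in place is to query each by name. The sketch below assumes the secret names and the default namespace used in step 1. The helper name check_secrets is hypothetical, and it only wraps the standard `kubectl get secret` command.

```shell
# Sketch: report which of the two required secrets are present in a namespace.
# Returns 0 if both exist, 1 if at least one is missing.
check_secrets() {
  ns="${1:-default}"
  missing=0
  for name in tailscale-auth aws-credentials; do
    if kubectl get secret "$name" -n "$ns" >/dev/null 2>&1; then
      echo "ok: $name"
    else
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}
```

Running `check_secrets default` prints one line per secret. Note that any kubectl failure (including an unreachable cluster) is reported as "missing", so a "missing" line is a hint to investigate rather than proof the secret is absent.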

1. Create secrets

    # Tailscale auth key (required by NodeClass)
    kubectl create secret generic tailscale-auth --from-literal=authkey="<YOUR_TAILSCALE_AUTHKEY>" -n default

    # AWS credentials (IAM user needs EC2 permissions)
    kubectl create secret generic aws-credentials \
      --from-literal=AWS_ACCESS_KEY_ID="<YOUR_AWS_ACCESS_KEY_ID>" \
      --from-literal=AWS_SECRET_ACCESS_KEY="<YOUR_AWS_SECRET_ACCESS_KEY>" \
      -n default

2. NodePool

    apiVersion: cloudburst.io/v1alpha1
    kind: NodePool
    metadata:
      name: aws-nodepool
      namespace: default
    spec:
      requirements:
        regionConstraint: "ANY"
        arch: ["x86_64"]
        maxPriceEurPerHour: 0.15
        allowedProviders: ["aws"]
      limits:
        maxNodes: 3
        minNodes: 0
      template:
        labels:
          cloudburst.io/nodepool: "aws-nodepool"
          cloudburst.io/provider: "aws"
      disruption:
        ttlSecondsAfterEmpty: 60
        ttlSecondsUntilExpired: 3600
      weight: 1

3. NodeClass

    apiVersion: cloudburst.io/v1alpha1
    kind: NodeClass
    metadata:
      name: aws-nodeclass
      namespace: default
    spec:
      aws:
        region: "eu-west-1"
        ami: "ami-xxxxxxxx"
        subnetID: "subnet-xxxxxxxx"
        accessKeyIDSecretRef:
          name: aws-credentials
          key: AWS_ACCESS_KEY_ID
        secretAccessKeySecretRef:
          name: aws-credentials
          key: AWS_SECRET_ACCESS_KEY
      join:
        hostApiServer: "https://<HOST_TAILSCALE_IP>:6443"
        kindClusterName: "cloudburst"
        tokenTtlMinutes: 60
      tailscale:
        authKeySecretRef:
          name: tailscale-auth
          key: authkey
      bootstrap:
        kubernetesVersion: "1.34.3"

4. Workload (triggers burst; targets AWS nodes)

    apiVersion: v1
    kind: Pod
    metadata:
      name: aws-workload
      namespace: default
    spec:
      containers:
        - name: workload
          image: busybox:1.36
          command: ["sleep", "infinity"]
          resources:
            requests:
              cpu: "1500m"
              memory: "2Gi"
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: cloudburst.io/provider
                    operator: In
                    values: ["aws"]

5. Apply and verify

    # Save the manifests above to aws-example.yaml (separate each document with a line containing only ---), then:
    kubectl apply -f aws-example.yaml

    # Watch NodeClaim creation
    kubectl get nodeclaims -w

    # Once the node is Ready, verify the pod and the node
    kubectl get pods -o wide
    kubectl get nodes -l cloudburst.io/provider=aws
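Instead of watching by hand, the verification could be scripted with `kubectl wait`. The sketch below assumes the pod name and namespace from step 4. The helper name wait_for_workload is hypothetical, and the 600s default timeout is an arbitrary guess rather than a documented provisioning time.

```shell
# Sketch: block until the workload pod is Ready, then print the node it runs on.
# Returns non-zero if the pod does not become Ready within the timeout.
wait_for_workload() {
  pod="${1:-aws-workload}"
  ns="${2:-default}"
  timeout="${3:-600s}"   # assumed value; tune to how long a burst node takes to join
  kubectl wait --for=condition=Ready "pod/$pod" -n "$ns" --timeout="$timeout" || return 1
  kubectl get pod "$pod" -n "$ns" -o jsonpath='{.spec.nodeName}{"\n"}'
}
```

Calling `wait_for_workload` with no arguments uses the defaults above. The printed node name can then be compared against the output of `kubectl get nodes -l cloudburst.io/provider=aws`.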